
Mark Zuckerberg, CEO of Meta, has announced significant changes to how content published on Facebook, Instagram and Threads is moderated. The company essentially intends to abandon the official labeling of fake news verified by external fact-checkers in favor of community notes, a solution Elon Musk has already implemented on X.com.

In a video lasting several minutes, posted on Facebook, Mark Zuckerberg argued that the change aims to reduce moderation errors and the improper labeling of published content as false, which many users described as censorship. The changes are also expected to simplify the rules on Meta's social media platforms and restore freedom of speech on them.

This sounds convincing. On the one hand, Meta admits to mistakes and to censoring content. On the other, it gives the community significant influence over how "freedom of speech" is interpreted, by letting users independently evaluate content published on Meta's platforms and add context to it.

What exactly does this mean? As for Meta's own continued role in content moderation, it will relax restrictions on certain topics, such as immigration and gender identity, and take a more neutral approach to political and social issues.

Instead, it will focus on violations of the law, such as terrorism, child sexual abuse, drugs, fraud and scams. One example is the well-known and troublesome ads on the Polish part of Facebook that promote fake investments or phish for login credentials.

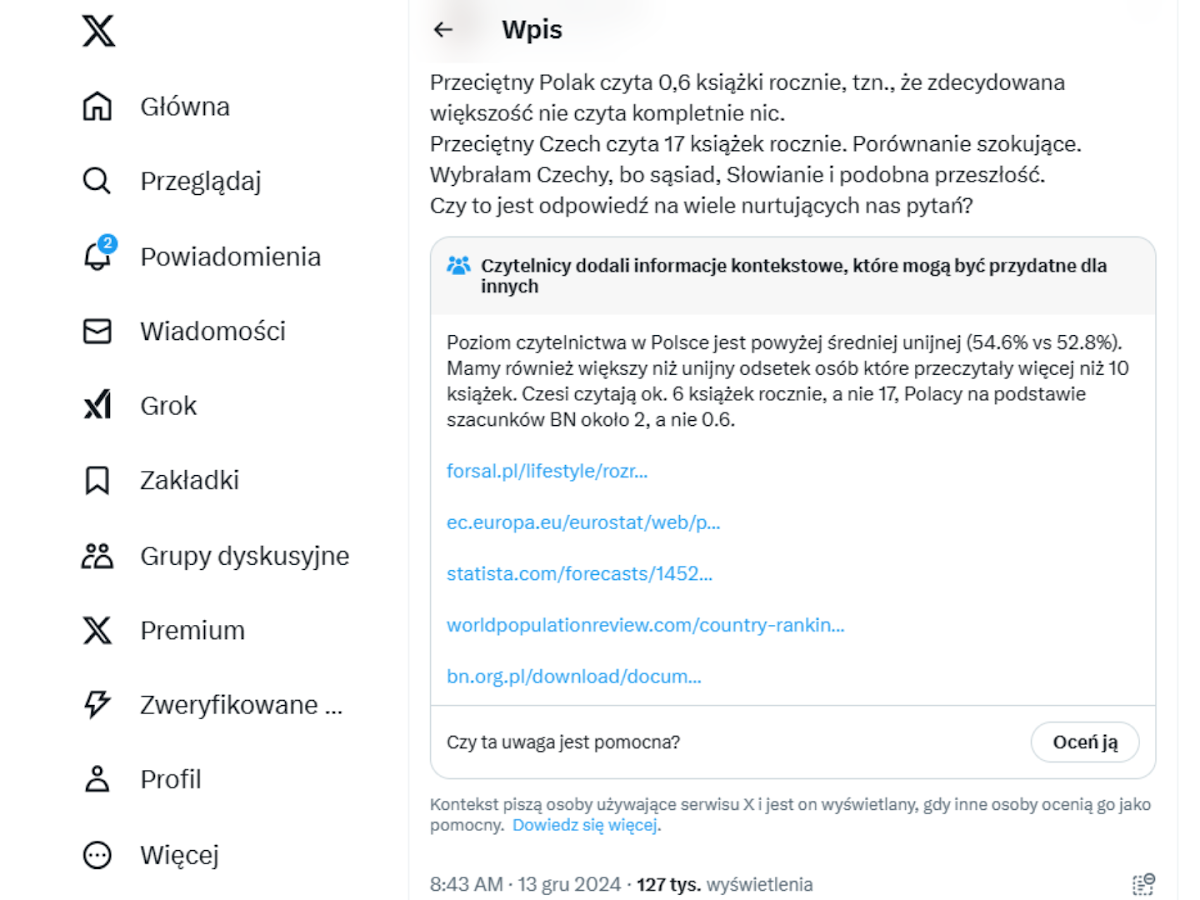

In turn, the community will be able to add context to published content by flagging it as suspicious. If enough people agree with that assessment, the note becomes visible to everyone.

How exactly does this work? Just look at the similar solution already in use on Elon Musk's platform, X.com.

If someone publishes controversial content and enough people flag it as misleading, backing their assessment with credible information, a note providing that context appears directly below the post.
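The rule described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Meta's or X's actual code: the thresholds `MIN_RATINGS`, `MIN_HELPFUL_SHARE` and `MIN_GROUPS`, and the `rater_group` label, are hypothetical. The group check loosely echoes the idea behind X's published Community Notes algorithm, which favors notes rated helpful by people who usually disagree with each other; the real system estimates this with matrix factorization rather than simple counts.

```python
from dataclasses import dataclass

# Hypothetical thresholds, chosen only for illustration.
MIN_RATINGS = 5          # minimum number of ratings before a note can be shown
MIN_HELPFUL_SHARE = 0.7  # share of ratings that must find the note helpful
MIN_GROUPS = 2           # distinct (hypothetical) viewpoint groups among helpful raters


@dataclass
class Rating:
    rater_group: str  # hypothetical label for a rater's usual viewpoint
    helpful: bool     # did this rater find the note helpful?


def note_is_visible(ratings: list[Rating]) -> bool:
    """Return True if a community note should be shown under the post."""
    if len(ratings) < MIN_RATINGS:
        return False  # not enough people have weighed in yet
    helpful = [r for r in ratings if r.helpful]
    share = len(helpful) / len(ratings)
    groups = {r.rater_group for r in helpful}
    # Require broad agreement, and agreement coming from more than one group.
    return share >= MIN_HELPFUL_SHARE and len(groups) >= MIN_GROUPS


# Five of six raters, from two different groups, find the note helpful.
ratings = [Rating("A", True)] * 4 + [Rating("B", True), Rating("B", False)]
print(note_is_visible(ratings))  # True
```

Requiring support from more than one group is the design choice that keeps a single like-minded crowd from attaching notes on its own; a pure head count would not have that property.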

This seems to be a very good solution: the posts labeled this way, including the example above, suggest that it works well. It's easy to imagine that this post, like millions of others, would never have caught the attention of fact-checkers. The community, on the other hand, catches such posts readily and enriches them with factual, accurate information.

Of course, this can only work as a supplement to overall moderation. I hope Meta will not abandon moderation altogether, but will instead strike a balance between protecting users from harmful content and ensuring their freedom of expression.

Whether and how these changes affect the fight against misinformation will become clear in the coming months, as Meta gradually rolls them out across its services. Fortunately, the changes are not irreversible: if they don't have the intended positive effect, they can be adjusted along the way.
